Are you interested in taking your machine learning projects to the next level? Look no further than AWS’s MLOps services! These powerful tools can help streamline your machine learning workflows and improve your overall efficiency. In this article, we’ll explore some of the key MLOps services offered by AWS and how they can benefit your projects.

Introduction to MLOps

Before we dive into specifics, let’s first define what MLOps is. MLOps, short for “machine learning operations,” is the practice of applying DevOps principles to machine learning workflows. This involves using automation and collaboration tools to streamline the development, deployment, and maintenance of machine learning models.

MLOps can help organizations improve their machine learning workflows in a number of ways, including:

- Streamlining the development process

- Maintaining model accuracy and performance over time

- Reducing time to deployment

- Improving collaboration between data scientists and IT teams

- Enhancing model monitoring and maintenance

Now that we have a basic understanding of MLOps, let’s explore some of the MLOps services offered by AWS.

Amazon SageMaker

Amazon SageMaker is a fully managed service that provides developers and data scientists with tools to build, train, and deploy machine learning models. Its features include:

- Built-in algorithms for common machine learning tasks

- Integration with popular machine learning frameworks like TensorFlow and PyTorch

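- Automatic model tuning, which runs many training jobs with different hyperparameter values to find the best-performing model

- Managed deployment of trained models to real-time endpoints or batch transform jobs

- Automatic scaling of deployed endpoints to match inference traffic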
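Automatic scaling for a SageMaker endpoint is configured through Application Auto Scaling. The sketch below only builds the request parameters, so it runs without AWS credentials; the dictionaries are shaped for the boto3 `application-autoscaling` client's `register_scalable_target` and `put_scaling_policy` calls. The endpoint name, capacity bounds, and target value are hypothetical, and the "at least one instance" check is this sketch's own choice.

```python
"""Sketch: request parameters for auto scaling a SageMaker endpoint variant.

Shaped for boto3's application-autoscaling client. Endpoint names, capacity
bounds, and the target value used with these helpers are hypothetical.
"""


def _resource_id(endpoint_name: str, variant_name: str) -> str:
    # Application Auto Scaling identifies a SageMaker variant this way.
    return f"endpoint/{endpoint_name}/variant/{variant_name}"


def scalable_target_params(endpoint_name: str, variant_name: str,
                           min_instances: int, max_instances: int) -> dict:
    """Build kwargs for register_scalable_target."""
    # This sketch keeps at least one instance running at all times.
    if min_instances < 1 or min_instances > max_instances:
        raise ValueError("expected 1 <= min_instances <= max_instances")
    return {
        "ServiceNamespace": "sagemaker",
        "ResourceId": _resource_id(endpoint_name, variant_name),
        "ScalableDimension": "sagemaker:variant:DesiredInstanceCount",
        "MinCapacity": min_instances,
        "MaxCapacity": max_instances,
    }


def target_tracking_policy_params(endpoint_name: str, variant_name: str,
                                  invocations_per_instance: float) -> dict:
    """Build kwargs for put_scaling_policy (target tracking on invocations)."""
    return {
        "PolicyName": f"{endpoint_name}-{variant_name}-invocations",
        "ServiceNamespace": "sagemaker",
        "ResourceId": _resource_id(endpoint_name, variant_name),
        "ScalableDimension": "sagemaker:variant:DesiredInstanceCount",
        "PolicyType": "TargetTrackingScaling",
        "TargetTrackingScalingPolicyConfiguration": {
            # Scale out/in to keep average invocations per instance near this value.
            "TargetValue": invocations_per_instance,
            "PredefinedMetricSpecification": {
                "PredefinedMetricType": "SageMakerVariantInvocationsPerInstance",
            },
        },
    }
```

With credentials configured, you would pass these to the client, for example `boto3.client("application-autoscaling").register_scalable_target(**scalable_target_params("my-endpoint", "AllTraffic", 1, 4))`, where `my-endpoint` is a placeholder name.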

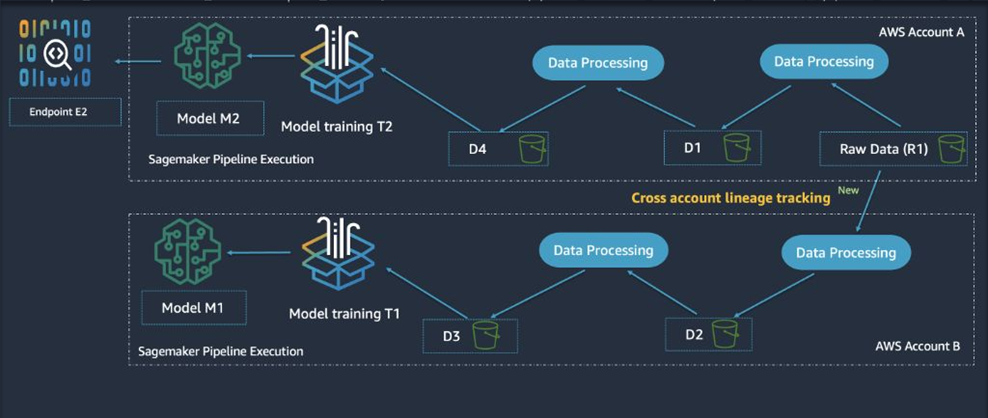

SageMaker also integrates with other AWS services, such as AWS Lambda and AWS Glue, to provide a comprehensive MLOps solution.
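To make the automatic model tuning feature listed above more concrete, here is a minimal sketch of the `HyperParameterTuningJobConfig` portion of a request to the boto3 `sagemaker` client's `create_hyper_parameter_tuning_job`. A real call also needs a job name and a `TrainingJobDefinition`, which are omitted here. The objective metric and parameter ranges assume the built-in XGBoost algorithm and are illustrative only.

```python
"""Sketch: the HyperParameterTuningJobConfig part of a SageMaker tuning request.

A real create_hyper_parameter_tuning_job call also needs
HyperParameterTuningJobName and a TrainingJobDefinition. The metric name and
ranges assume the built-in XGBoost algorithm and are illustrative only.
"""


def tuning_job_config(max_jobs: int, max_parallel: int) -> dict:
    """Build a Bayesian-search tuning config that maximizes validation AUC."""
    if max_parallel < 1 or max_parallel > max_jobs:
        raise ValueError("expected 1 <= max_parallel <= max_jobs")
    return {
        "Strategy": "Bayesian",
        "HyperParameterTuningJobObjective": {
            "Type": "Maximize",
            "MetricName": "validation:auc",  # assumes eval_metric=auc is set
        },
        "ResourceLimits": {
            "MaxNumberOfTrainingJobs": max_jobs,
            "MaxParallelTrainingJobs": max_parallel,
        },
        "ParameterRanges": {
            # Range bounds are passed as strings in this API.
            "ContinuousParameterRanges": [
                {"Name": "eta", "MinValue": "0.01", "MaxValue": "0.3",
                 "ScalingType": "Logarithmic"},
            ],
            "IntegerParameterRanges": [
                {"Name": "max_depth", "MinValue": "3", "MaxValue": "10",
                 "ScalingType": "Linear"},
            ],
        },
    }
```

Running fewer jobs in parallel than the total lets Bayesian search learn from completed jobs before choosing the next hyperparameter values, at the cost of a longer wall-clock time.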

Amazon S3

Amazon S3, short for “Simple Storage Service,” is a highly scalable object storage service that can be used to store and manage large datasets. S3 Standard stores data redundantly across multiple Availability Zones and is designed for 99.999999999% (11 nines) durability.

S3 can be used as a central repository for all your machine learning data, including training data, model artifacts, and performance metrics. This makes it easy to share data across teams and to scale up your machine learning workflows as needed.
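One way to use S3 as a central repository is to agree on a key layout that every team follows. The sketch below assumes a hypothetical convention of `datasets/`, `models/`, and `metrics/` prefixes with a version segment; neither S3 nor SageMaker requires this layout. It also shows splitting an `s3://` URI into the separate bucket and key arguments that boto3 calls expect.

```python
"""Sketch: a hypothetical key layout for keeping ML data in one S3 bucket.

The prefixes and the version segment are illustrative conventions only.
"""
from urllib.parse import urlparse

# Hypothetical top-level prefixes, one per kind of artifact.
PREFIXES = {"dataset": "datasets", "model": "models", "metrics": "metrics"}


def artifact_uri(bucket: str, kind: str, project: str,
                 version: str, filename: str) -> str:
    """Return a URI such as s3://bucket/models/churn/v3/model.tar.gz."""
    if kind not in PREFIXES:
        raise ValueError(f"unknown artifact kind: {kind!r}")
    return f"s3://{bucket}/{PREFIXES[kind]}/{project}/{version}/{filename}"


def split_s3_uri(uri: str) -> tuple[str, str]:
    """Split s3://bucket/key into (bucket, key).

    Note: urlparse treats '?' and '#' specially, so keys containing
    those characters would need different handling.
    """
    parsed = urlparse(uri)
    if parsed.scheme != "s3" or not parsed.netloc:
        raise ValueError(f"not an S3 URI: {uri!r}")
    return parsed.netloc, parsed.path.lstrip("/")
```

For example, `boto3.client("s3").upload_file("model.tar.gz", *split_s3_uri(uri))` would upload a local file to the key the convention produces; the bucket and project names are placeholders.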

AWS Lambda

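AWS Lambda is a serverless compute service that lets you run code without provisioning or managing servers. With Lambda, you can run steps of your machine learning workflow in response to events, such as new data arriving in S3 or a model training job completing (delivered through Amazon EventBridge). Because a single invocation can run for at most 15 minutes, Lambda is better suited to lightweight glue steps, such as starting a SageMaker training job, than to long-running training itself.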

Lambda supports a wide range of programming languages, including Python, Java, and Node.js, and can be easily integrated with other AWS services like S3 and SageMaker. This makes it easy to build serverless MLOps workflows that scale automatically to handle your workload.
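As an illustration of event-driven triggering, here is a minimal sketch of a Python Lambda handler for S3 event notifications. It reads the bucket name and object key from the standard S3 event payload (keys arrive URL-encoded). To stay testable without AWS, it only records which inputs would start a job; the `datasets/` prefix filter is a hypothetical convention, and the SageMaker call is left as a comment.

```python
"""Sketch: a Lambda handler that reacts to new training data landing in S3.

The datasets/ prefix filter is hypothetical. Instead of calling SageMaker,
the handler returns the inputs it would act on, so it can be tested locally.
"""
from urllib.parse import unquote_plus


def keys_from_s3_event(event: dict) -> list[tuple[str, str]]:
    """Extract (bucket, key) pairs; object keys arrive URL-encoded."""
    pairs = []
    for record in event.get("Records", []):
        s3 = record["s3"]
        pairs.append((s3["bucket"]["name"], unquote_plus(s3["object"]["key"])))
    return pairs


def lambda_handler(event, context):
    """Entry point: only objects under datasets/ trigger work."""
    triggered = []
    for bucket, key in keys_from_s3_event(event):
        if not key.startswith("datasets/"):
            continue  # ignore logs, model artifacts, and other uploads
        triggered.append({"input_uri": f"s3://{bucket}/{key}"})
        # In a deployed function you might start training here, e.g. with
        # boto3.client("sagemaker").create_training_job(...)
    return {"triggered": triggered}
```

Filtering by prefix inside the handler keeps the sketch self-contained; in practice you can also configure the S3 event notification itself to fire only for a given prefix or suffix.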

Amazon CloudWatch

Amazon CloudWatch is a monitoring and observability service that provides visibility into your AWS resources and applications. For deployed models, CloudWatch collects built-in SageMaker endpoint metrics in near real time, such as prediction latency (`ModelLatency`) and instance CPU and memory utilization. Model accuracy is not a built-in metric, but you can publish it to CloudWatch yourself as a custom metric.

With CloudWatch, you can set up alarms that notify you when a metric crosses a threshold you define, for example when model accuracy drops below a target value or when resource utilization exceeds a set limit. This can help you proactively identify and resolve issues before they affect your business.
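The accuracy alarm described above can be sketched as two request builders: one for publishing an accuracy value as a custom metric and one for an alarm on that metric. The dictionaries match the parameters of the boto3 `cloudwatch` client's `put_metric_data` and `put_metric_alarm`; the namespace, metric name, dimension, threshold, and SNS topic ARN are all hypothetical.

```python
"""Sketch: publish a custom accuracy metric and alarm when it drops.

Namespace, metric name, dimension, and threshold are hypothetical.
"""

NAMESPACE = "MyCompany/ML"  # hypothetical custom namespace
METRIC = "ModelAccuracy"    # hypothetical metric name


def _dimensions(model_name: str) -> list[dict]:
    # The alarm must use exactly the same dimensions as the published metric.
    return [{"Name": "ModelName", "Value": model_name}]


def accuracy_metric_data(model_name: str, accuracy: float) -> dict:
    """kwargs for put_metric_data with one accuracy datapoint."""
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must be in [0, 1]")
    return {
        "Namespace": NAMESPACE,
        "MetricData": [{
            "MetricName": METRIC,
            "Dimensions": _dimensions(model_name),
            "Value": accuracy,
            "Unit": "None",
        }],
    }


def accuracy_alarm(model_name: str, threshold: float, topic_arn: str) -> dict:
    """kwargs for put_metric_alarm: fire when the hourly average < threshold."""
    return {
        "AlarmName": f"{model_name}-accuracy-below-{threshold}",
        "Namespace": NAMESPACE,
        "MetricName": METRIC,
        "Dimensions": _dimensions(model_name),
        "Statistic": "Average",
        "Period": 3600,  # seconds
        "EvaluationPeriods": 1,
        "Threshold": threshold,
        "ComparisonOperator": "LessThanThreshold",
        "AlarmActions": [topic_arn],  # e.g. an SNS topic that notifies the team
    }
```

An evaluation job could call `boto3.client("cloudwatch").put_metric_data(**accuracy_metric_data("churn", 0.93))` after scoring fresh labeled data, while the alarm is created once with `put_metric_alarm`.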

Conclusion

AWS offers a range of services, such as SageMaker, S3, Lambda, and CloudWatch, that together can help streamline your machine learning workflows and improve your overall efficiency. Whether you’re just getting started with machine learning or looking to take your existing projects to the next level, AWS has the tools you need to succeed. So why wait? Start exploring AWS’s MLOps services today!