Are you tired of manually deploying and managing your machine learning models in production? Do you want to streamline your operations and increase efficiency? Look no further than MLOps!

MLOps, short for Machine Learning Operations, is a set of practices and tools for integrating machine learning into the software development and deployment process. It applies established software engineering and DevOps practices, such as version control, automated testing, and continuous delivery, to the machine learning lifecycle, automating the deployment, monitoring, and management of models in production.

In this article, we’ll explore how Site Reliability Engineers (SREs) can leverage MLOps to optimize their operations and ensure the reliability of their machine learning models. But first, let’s start with some basics.

What is SRE?

SRE, short for Site Reliability Engineering, is a discipline that combines software engineering and operations to improve the reliability, scalability, and performance of complex systems. SREs are responsible for designing, building, and maintaining the infrastructure and services that power modern web applications.

SREs face a unique set of challenges when dealing with machine learning models. Unlike traditional software, a model's behavior depends on the data it was trained on, so its accuracy can degrade as real-world data shifts, even when no code has changed. Models therefore need regular updates and maintenance. Moreover, they often serve predictions in real time and must be highly available and scalable to handle large volumes of data.

What is MLOps?

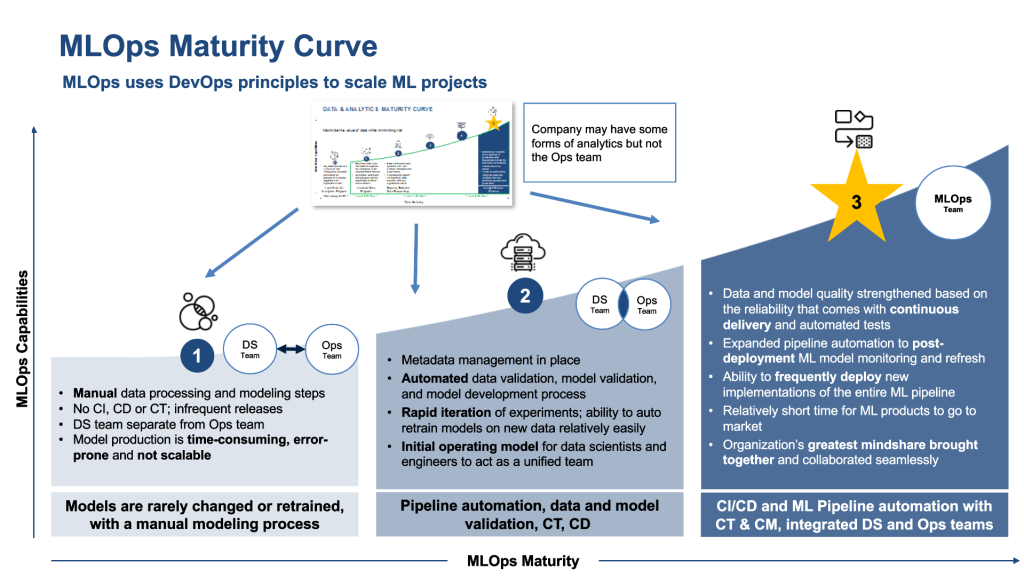

MLOps is a set of practices and tools that enable organizations to build, deploy, and manage machine learning models in production. It involves the integration of machine learning models into the software development and deployment process, using automation and monitoring to ensure the reliability and scalability of the models.

MLOps comprises several key components, including:

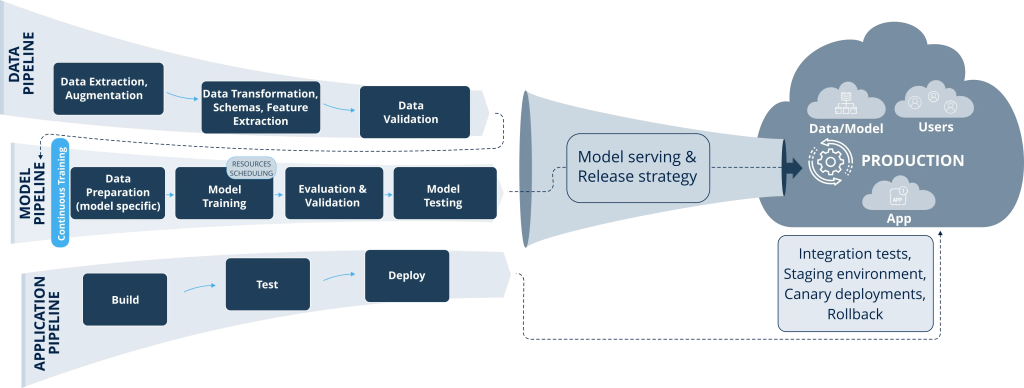

- Data management: Collecting, cleaning, and storing data for training and inference.

- Model development: Building and testing machine learning models using frameworks like TensorFlow or PyTorch.

- Model deployment: Deploying machine learning models to production environments using containerization and orchestration tools like Docker and Kubernetes.

- Model monitoring: Monitoring the performance and accuracy of machine learning models in production, using metrics and alerts to detect and diagnose issues.

- Model retraining: Updating machine learning models with new data and features to improve accuracy and performance over time.
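These components hand off to one another: a retrained model coming out of model development should reach deployment only if it actually improves on the model already serving traffic. The sketch below shows one minimal way to express such a promotion gate in Python. It assumes both models are scored on the same held-out labeled set and uses plain accuracy as the metric; the function names and the `min_gain` margin are hypothetical illustrations, not part of any particular MLOps tool.

```python
def accuracy(predictions, labels):
    """Return the fraction of predictions that match the ground-truth labels."""
    if not labels or len(predictions) != len(labels):
        raise ValueError("predictions and labels must be non-empty and the same length")
    correct = sum(1 for p, y in zip(predictions, labels) if p == y)
    return correct / len(labels)


def should_promote(candidate_preds, production_preds, labels, min_gain=0.01):
    """Hypothetical promotion gate: deploy the candidate only if its accuracy
    beats the production model by at least `min_gain` on the same labels."""
    candidate_acc = accuracy(candidate_preds, labels)
    production_acc = accuracy(production_preds, labels)
    return candidate_acc >= production_acc + min_gain


if __name__ == "__main__":
    labels = [1, 0, 1, 1, 0, 1, 0, 0, 1, 1]
    production = [1, 0, 0, 1, 0, 1, 1, 0, 1, 0]  # 7 of 10 correct
    candidate = [1, 0, 1, 1, 0, 1, 1, 0, 1, 1]   # 9 of 10 correct
    print(should_promote(candidate, production, labels))  # True
```

The margin guards against promoting a model whose apparent gain is just noise; a real gate would likely also check operational metrics such as latency before rollout.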

How Can SREs Use MLOps?

SREs can use MLOps to streamline their operations and improve the reliability of their machine learning models. Here are some key ways that SREs can leverage MLOps:

1. Automate Deployment and Scaling

By packaging models in containers with Docker and running them on an orchestrator such as Kubernetes, SREs can automate the deployment and scaling of machine learning models in production environments. For example, Kubernetes can add or remove model-serving replicas as load changes. This reduces the manual effort required to deploy and manage models, freeing SREs to focus on more critical tasks.
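Automated scaling usually comes down to a simple control rule. The Kubernetes Horizontal Pod Autoscaler documents a proportional rule, `desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue)`, and skips scaling when the ratio is within a tolerance of 1.0 (0.1 by default). The Python sketch below reproduces just that arithmetic for a model-serving deployment. It is a simplified illustration, not the autoscaler's implementation: it omits stabilization windows and readiness handling, and the function name and default bounds are hypothetical.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10, tolerance=0.1):
    """Proportional scaling rule in the style of the Kubernetes HPA:
        desired = ceil(current_replicas * current_metric / target_metric)
    Bounds and tolerance defaults here are illustrative assumptions."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    ratio = current_metric / target_metric
    # Skip scaling when the ratio is close to 1.0 to avoid flapping.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    desired = math.ceil(current_replicas * ratio)
    # Clamp to the configured bounds, as minReplicas/maxReplicas do in an HPA spec.
    return max(min_replicas, min(max_replicas, desired))


if __name__ == "__main__":
    # 4 replicas averaging 90% CPU against a 60% target: scale out to 6.
    print(desired_replicas(4, current_metric=90, target_metric=60))  # 6
```

The metric can be anything averaged per replica, such as CPU utilization or requests per second; model servers are often scaled on request rate or latency rather than CPU.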

2. Monitor Model Performance

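SREs can use metrics and alerts to monitor both the operational health of machine learning models in production (latency, error rate, throughput) and their predictive quality (accuracy, shifts in input or prediction distributions). Tracking both matters because a model can respond quickly and without errors while still returning wrong answers. This helps SREs detect issues early and diagnose problems before they become critical. Applying machine learning techniques such as anomaly detection to these metrics can further help predict and prevent issues, improving the reliability and availability of the models.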
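Accuracy monitoring is harder than latency monitoring because it needs ground-truth labels, which often arrive after the prediction was served. A minimal Python sketch of one approach, assuming labels eventually become available, is a rolling window over recent labeled predictions that raises an alert when accuracy falls below a threshold. The class name, window size, threshold, and `min_samples` guard are hypothetical; in production these values would typically be exported to an existing metrics and alerting system rather than checked in application code.

```python
from collections import deque


class RollingAccuracyMonitor:
    """Track accuracy over the most recent `window` labeled predictions and
    flag an alert when it drops below `threshold` (illustrative values)."""

    def __init__(self, window=100, threshold=0.9, min_samples=20):
        self.outcomes = deque(maxlen=window)  # 1 = correct, 0 = incorrect
        self.threshold = threshold
        self.min_samples = min_samples  # avoid alerting on too little data

    def record(self, prediction, label):
        """Store whether a served prediction matched its (late) label."""
        self.outcomes.append(1 if prediction == label else 0)

    def accuracy(self):
        """Accuracy over the current window, or None if nothing is recorded."""
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def should_alert(self):
        """True once enough samples exist and accuracy is below threshold."""
        if len(self.outcomes) < self.min_samples:
            return False
        return self.accuracy() < self.threshold


if __name__ == "__main__":
    monitor = RollingAccuracyMonitor(window=10, threshold=0.8, min_samples=5)
    for _ in range(10):
        monitor.record(1, 1)  # ten correct predictions
    for _ in range(3):
        monitor.record(1, 0)  # three misses push out three older hits
    print(monitor.accuracy(), monitor.should_alert())  # 0.7 True
```

Because the deque has a fixed `maxlen`, old outcomes fall out automatically, so the alert reflects recent behavior rather than lifetime accuracy.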

3. Train Models Continuously

Machine learning models often need periodic retraining to maintain accuracy as the data they see in production changes. By using MLOps tools like Kubeflow (for orchestrating training pipelines) or MLflow (for tracking experiments and managing model versions), SREs can automate the process of retraining models with new data and features. This helps keep models current and reduces the risk of silent accuracy loss, errors, and downtime.
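Automated retraining needs a trigger. One common choice, swapped in here as an example rather than taken from any specific tool, is to compare the distribution of a live input feature against the training-time reference using the population stability index (PSI): the sum over bins of `(live_p - ref_p) * ln(live_p / ref_p)`. The Python sketch below assumes fixed bin edges chosen from the reference data; the 0.2 threshold is a widely cited rule of thumb, not a standard, and all function names are hypothetical. The retraining job itself (for example, a pipeline run in an orchestrator) is not shown.

```python
import bisect
import math


def bin_proportions(values, edges):
    """Fraction of values in each bin defined by sorted `edges`.
    Out-of-range values are clamped into the first or last bin."""
    n_bins = len(edges) - 1
    counts = [0] * n_bins
    for v in values:
        idx = bisect.bisect_right(edges, v) - 1
        idx = min(max(idx, 0), n_bins - 1)
        counts[idx] += 1
    return [c / len(values) for c in counts]


def population_stability_index(reference, live, edges, eps=1e-6):
    """PSI = sum over bins of (live_p - ref_p) * ln(live_p / ref_p)."""
    ref_p = bin_proportions(reference, edges)
    live_p = bin_proportions(live, edges)
    psi = 0.0
    for r, l in zip(ref_p, live_p):
        r, l = max(r, eps), max(l, eps)  # avoid log(0) for empty bins
        psi += (l - r) * math.log(l / r)
    return psi


def needs_retraining(reference, live, edges, threshold=0.2):
    """Hypothetical trigger: retrain when drift exceeds the threshold."""
    return population_stability_index(reference, live, edges) > threshold


if __name__ == "__main__":
    edges = [0, 25, 50, 75, 100]
    reference = list(range(100))            # evenly spread over four bins
    shifted = [v + 50 for v in reference]   # live data has drifted upward
    print(needs_retraining(reference, reference, edges))  # False
    print(needs_retraining(reference, shifted, edges))    # True
```

PSI only looks at one feature at a time and ignores labels, so it is best treated as an early warning that complements accuracy monitoring rather than a replacement for it.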

4. Collaborate Across Teams

MLOps requires collaboration across teams, including data scientists, software engineers, and operations teams. By using shared tools such as Git for version control and Jupyter notebooks for exploring and documenting experiments, SREs can collaborate and share code and data with other teams. This improves communication and reduces the risk of errors and misunderstandings.

Conclusion

MLOps is a powerful set of practices and tools that can help SREs optimize their operations and ensure the reliability of their machine learning models. By automating deployment and scaling, monitoring model performance, training models continuously, and collaborating across teams, SREs can improve the reliability, scalability, and performance of their machine learning models. So why wait? Start using MLOps today and take your operations to the next level!