Have you ever wondered what DataOps actually does? It’s a field that grows in importance as more organizations come to depend on data. In this article, we’ll explore what DataOps is, what its key components are, and why it matters in today’s data-driven world.

Introduction

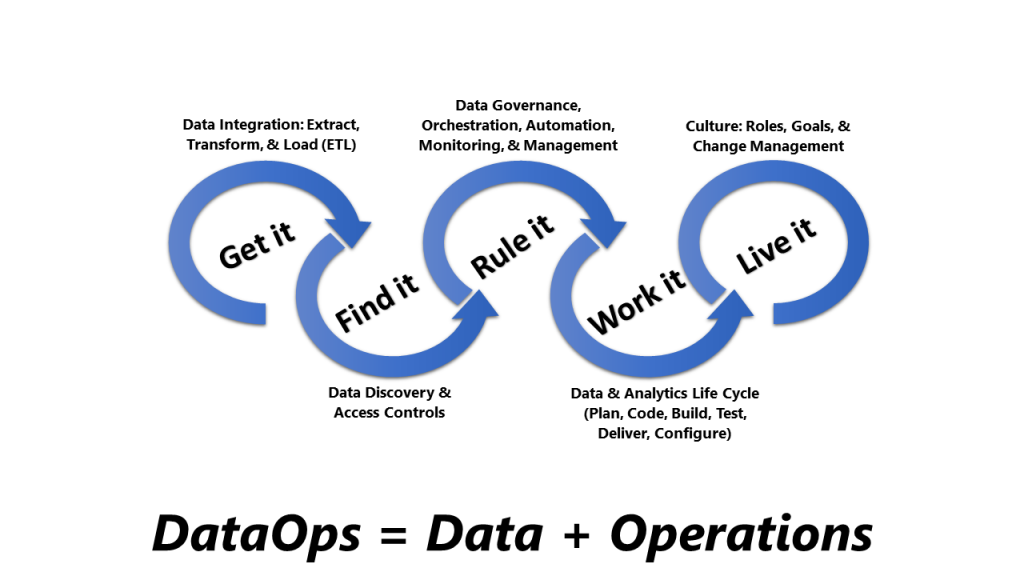

DataOps is a relatively new term for applying DevOps-style practices to data work. It brings data engineering, data quality, and data security into a single delivery process. The aim is to streamline the data pipeline, from data ingestion to data consumption, by having different teams and tools work in tandem.

What is DataOps?

In practice, DataOps is a collaborative approach to managing data that relies on continuous integration and delivery, automated testing, and agile development practices. It treats data quality, security, and governance as concerns for the entire data lifecycle, not just a single stage of it.

The Benefits of DataOps

The benefits commonly attributed to DataOps include improved data quality, faster time-to-market, reduced costs, and increased collaboration between teams. When teams and tools share one pipeline, organizations can streamline that pipeline, reduce errors, and improve the overall efficiency of their data operations.

How DataOps Works

DataOps works by integrating different tools and processes into a single, cohesive pipeline. It involves a combination of automation, collaboration, and agile development practices to ensure that data is processed quickly and accurately.
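To make the idea of a single, cohesive pipeline more concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the `ingest`, `transform`, `validate`, and `run_pipeline` names, the order records, and the rule that amounts must not be negative. It only shows the shape of the idea: stages run in a fixed order, and an automated check stops the run before bad data reaches consumers.

```python
from typing import Callable, Iterable

Record = dict
Stage = Callable[[list[Record]], list[Record]]


def ingest() -> list[Record]:
    # Hypothetical source: in practice this would read from a file, queue, or API.
    return [
        {"order_id": 1, "amount": "19.99"},
        {"order_id": 2, "amount": "5.00"},
    ]


def transform(records: list[Record]) -> list[Record]:
    # Convert the amount from text to a number so consumers can aggregate it.
    return [{**r, "amount": float(r["amount"])} for r in records]


def validate(records: list[Record]) -> list[Record]:
    # Automated gate: stop the run instead of passing bad data downstream.
    for r in records:
        if r["amount"] < 0:
            raise ValueError(f"negative amount in order {r['order_id']}")
    return records


def run_pipeline(records: list[Record], stages: Iterable[Stage]) -> list[Record]:
    # Apply each stage in order; any exception aborts the whole run.
    for stage in stages:
        records = stage(records)
    return records


result = run_pipeline(ingest(), [transform, validate])
```

Keeping each stage as a plain function means the same stages can be run by a scheduler, by a test suite, or by hand, which is one way to support the automated testing mentioned earlier.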

The Key Components of DataOps

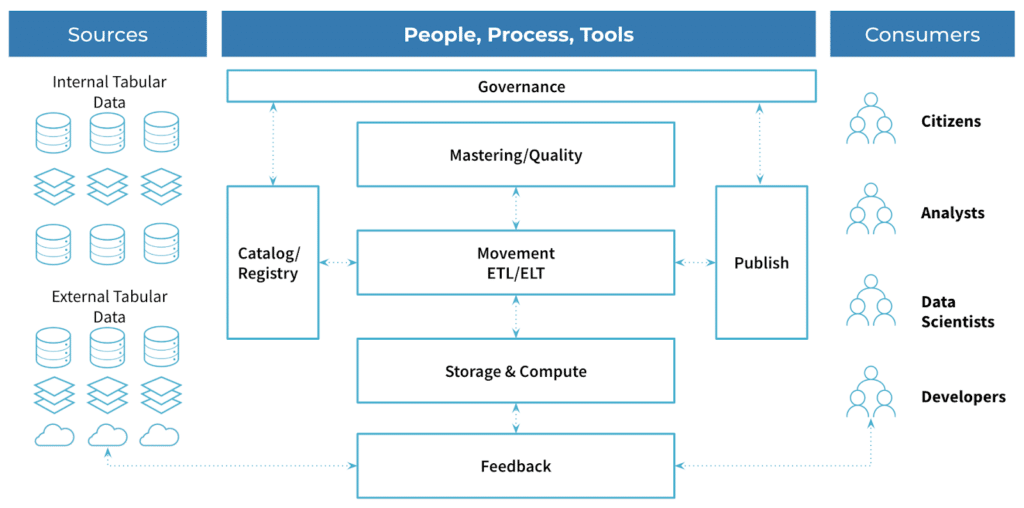

There are several key components of DataOps, including data engineering, data quality, data security, and data governance. These components work together to ensure that data is processed efficiently and accurately, while also maintaining its integrity and security.

Data Engineering

Data engineering is the process of designing, building, and maintaining the data infrastructure that supports an organization’s data pipeline. It involves creating and managing data pipelines, data warehouses, and data lakes, as well as developing and maintaining data integration and ETL (Extract, Transform, Load) processes.
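As a rough illustration of an ETL process, the sketch below extracts rows from CSV text, transforms them, and loads them into a table. It uses only the Python standard library, with an in-memory SQLite database standing in for a data warehouse. The sample data, the `revenue` table, and the function names are made up for this example.

```python
import csv
import io
import sqlite3

# Hypothetical raw export; a real pipeline would read this from a file or an API.
RAW_CSV = """customer_id,country,revenue
1,us,100.50
2,DE,80.00
3,us,19.50
"""


def extract(text: str) -> list[dict]:
    # Extract: parse the CSV text into one dict per row.
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows: list[dict]) -> list[tuple]:
    # Transform: normalize country codes and cast fields to proper types.
    return [
        (int(r["customer_id"]), r["country"].upper(), float(r["revenue"]))
        for r in rows
    ]


def load(conn: sqlite3.Connection, rows: list[tuple]) -> None:
    # Load: write the cleaned rows into a table (SQLite stands in for a warehouse).
    conn.execute(
        "CREATE TABLE IF NOT EXISTS revenue "
        "(customer_id INTEGER PRIMARY KEY, country TEXT, revenue REAL)"
    )
    conn.executemany("INSERT INTO revenue VALUES (?, ?, ?)", rows)
    conn.commit()


conn = sqlite3.connect(":memory:")
load(conn, transform(extract(RAW_CSV)))
totals = conn.execute(
    "SELECT country, SUM(revenue) FROM revenue GROUP BY country ORDER BY country"
).fetchall()
```

After the load step, `totals` holds the revenue per country, which is the kind of query a downstream consumer might run against the warehouse.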

Data Quality

Data quality is the process of ensuring that data is accurate, complete, and consistent. It involves developing and implementing data quality rules, performing data profiling and data cleansing, and monitoring data quality metrics.
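A data quality rule can be as small as a function that computes a metric for each batch. The sketch below is one possible shape, not a standard. The `check_quality` name, the two rules (completeness and key uniqueness), and the sample records are all assumptions made for illustration.

```python
def check_quality(rows: list[dict], required: list[str], key: str) -> dict:
    """Return simple quality metrics for a batch of records."""
    total = len(rows)
    # Completeness: rows where every required field is present and non-empty.
    complete = sum(
        all(r.get(f) not in (None, "") for f in required) for r in rows
    )
    # Uniqueness: number of rows whose key repeats the key of another row.
    keys = [r.get(key) for r in rows]
    duplicates = len(keys) - len(set(keys))
    return {
        "rows": total,
        "completeness": complete / total if total else 1.0,
        "duplicate_keys": duplicates,
    }


rows = [
    {"id": 1, "email": "a@example.com"},
    {"id": 2, "email": ""},
    {"id": 2, "email": "b@example.com"},
    {"id": 3, "email": None},
]
report = check_quality(rows, required=["id", "email"], key="id")
```

Metrics like these can be logged on every run and compared against a threshold, so that a drop in completeness is noticed when it happens instead of weeks later.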

Data Security

Data security is the process of protecting data from unauthorized access, use, disclosure, disruption, modification, or destruction. It involves implementing security controls, such as access controls, encryption, and data masking, to ensure that data is protected from both internal and external threats.
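Two of the controls named above, data masking and access controls, are easy to sketch with the Python standard library. The function names, the demo secret, and the role-to-column policy below are hypothetical. Encryption is left out because it would normally come from a vetted library or the storage layer, not from hand-written code.

```python
import hashlib
import hmac


def mask_email(email: str) -> str:
    # Data masking: keep the first character and the domain, hide the rest.
    local, _, domain = email.partition("@")
    return f"{local[:1]}{'*' * (len(local) - 1)}@{domain}"


def pseudonymize(value: str, secret: bytes) -> str:
    # Keyed hash (HMAC-SHA256): the same input always maps to the same token,
    # but the token cannot be recomputed without the secret key.
    return hmac.new(secret, value.encode("utf-8"), hashlib.sha256).hexdigest()


def can_read(role: str, column: str, policy: dict[str, set[str]]) -> bool:
    # Access control: a role may read only the columns listed for it.
    return column in policy.get(role, set())


SECRET = b"demo-only-secret"  # assumption: a real key would come from a secrets manager
POLICY = {"analyst": {"country", "revenue"}, "support": {"email"}}

masked = mask_email("alice@example.com")
token = pseudonymize("alice@example.com", SECRET)
```

Masking suits values that people still need to recognize on screen, while pseudonymized tokens suit joins and counts where the real value is never needed.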

Data Governance

Data governance is the process of managing the availability, usability, integrity, and security of the data used in an organization. It involves developing and implementing data policies, standards, and guidelines, as well as monitoring and enforcing compliance with these policies.
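Monitoring compliance with data policies can be partly automated by checking dataset metadata against the policy. The sketch below assumes a very small, invented policy: every dataset needs an owner, a classification from an approved list, and a retention period within a limit. The `Dataset` fields, the allowed values, and the 365-day limit are illustrative only.

```python
from dataclasses import dataclass

ALLOWED_CLASSIFICATIONS = {"public", "internal", "confidential"}
MAX_RETENTION_DAYS = 365  # hypothetical policy limit


@dataclass
class Dataset:
    name: str
    owner: str
    classification: str
    retention_days: int


def policy_violations(ds: Dataset) -> list[str]:
    # Compare one dataset's metadata against each policy rule in turn.
    violations = []
    if not ds.owner:
        violations.append("missing owner")
    if ds.classification not in ALLOWED_CLASSIFICATIONS:
        violations.append(f"unknown classification: {ds.classification}")
    if ds.retention_days > MAX_RETENTION_DAYS:
        violations.append("retention exceeds policy limit")
    return violations


catalog = [
    Dataset("orders", "sales-team", "internal", 180),
    Dataset("clickstream", "", "secret", 730),
]
report = {ds.name: policy_violations(ds) for ds in catalog}
```

Here `orders` passes with an empty list, while `clickstream` is flagged on all three rules. A report like this could be produced on a schedule and sent to whoever is responsible for enforcing the policy.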

Why DataOps is Important

DataOps is important because it helps organizations manage their data more efficiently and effectively. As data passes through more teams and tools on its way from ingestion to consumption, a shared pipeline reduces errors, and the quality, security, and governance components keep the data’s integrity and security intact along the way.

Conclusion

In conclusion, DataOps is a methodology that aims to streamline the data pipeline by bringing different teams and tools together in a single, cohesive workflow. It combines automation, collaboration, and agile development practices so that data is processed quickly and accurately. By implementing DataOps, organizations can improve their data quality, reduce costs, and increase collaboration between teams.