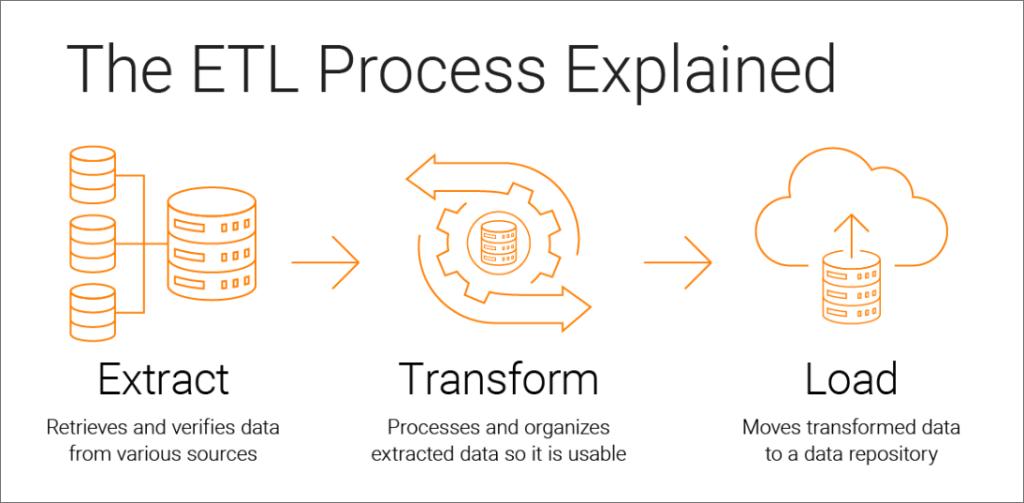

ETL (Extract, Transform, Load) tools are software for data integration and data warehousing. They let organizations extract data from various sources, transform it to fit a target format or structure, and load it into a data warehouse, data lake, or database for analysis, reporting, and business intelligence.

Key Components of ETL Tools:

- Extract: The extract phase involves gathering data from different source systems, which can be databases, flat files, web services, cloud applications, APIs, or other data repositories.

- Transform: During the transform phase, the data is cleaned, validated, and converted into a consistent format suitable for analysis and reporting. Typical operations include cleansing, enrichment, aggregation, and normalization.

- Load: In the load phase, the transformed data is loaded into the target data warehouse, data lake, or database. This is where the data is stored for querying and analysis by end-users.
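
The three phases can be sketched in a few lines of Python. This is a minimal illustration, not how any particular ETL tool works internally: the CSV sample, the `orders` table, and the function names `extract`, `transform`, and `load` are all assumptions made for the example, and an in-memory SQLite database stands in for the target warehouse.

```python
import csv
import io
import sqlite3

# Hypothetical source data standing in for a flat-file export.
RAW_CSV = """order_id,customer,amount
1, alice ,10.50
2,BOB,
3,carol,7.25
"""

def extract(text):
    """Extract: read rows from a CSV source as dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))

def transform(rows):
    """Transform: drop incomplete rows, normalize names, cast types."""
    cleaned = []
    for row in rows:
        amount = row["amount"].strip()
        if not amount:
            continue  # cleansing: skip records with no amount
        cleaned.append(
            (int(row["order_id"]), row["customer"].strip().title(), float(amount))
        )
    return cleaned

def load(rows, conn):
    """Load: write the transformed rows into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(order_id INTEGER PRIMARY KEY, customer TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()

conn = sqlite3.connect(":memory:")  # stand-in for a real warehouse
load(transform(extract(RAW_CSV)), conn)
total = conn.execute("SELECT SUM(amount) FROM orders").fetchone()[0]
print(total)
```

Keeping each phase in its own function mirrors how ETL tools separate source connectors, transformation steps, and target writers, so any one of them can be swapped without touching the others.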

Key Features of ETL Tools:

- Data Connectivity: ETL tools provide connectors and adapters to connect to various data sources and extract data from them. They support a wide range of data formats and protocols to ensure seamless data integration.

- Data Transformation: ETL tools offer a variety of data transformation functions and operations to clean, enrich, and manipulate data as needed during the ETL process.

- Workflow Automation: ETL tools allow users to create and manage ETL workflows or data pipelines, automating the ETL process and reducing manual intervention.

- Scalability and Performance: ETL tools are designed to handle large volumes of data efficiently, typically by processing data in parallel or in batches across the extraction, transformation, and loading stages.

- Data Quality and Validation: ETL tools often include data quality and validation features to ensure that data is accurate, complete, and consistent before loading it into the target destination.

- Error Handling and Logging: ETL tools provide error handling mechanisms and logging capabilities to track and manage any data errors or issues that may occur during the ETL process.

- Scheduling and Monitoring: ETL tools support job scheduling and monitoring, allowing users to set up automated data extraction and transformation schedules and monitor the progress and status of ETL jobs.
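
To make the data quality and error handling features more concrete, the sketch below validates records against two simple rules, routes failures to a reject list, and logs each rejection. The rules, the field names, and the `split_valid_rejected` helper are hypothetical; ETL tools typically expose comparable behavior through configuration instead of hand-written code.

```python
import logging

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("etl.validate")

def validate(record):
    """Return a list of rule violations for one record (empty means valid)."""
    problems = []
    email = record.get("email")
    if not email or "@" not in email:
        problems.append("invalid email")
    age = record.get("age")
    if not isinstance(age, int) or not 0 <= age <= 130:
        problems.append("age out of range")
    return problems

def split_valid_rejected(records):
    """Separate clean records from rejects, logging why each reject failed."""
    valid, rejected = [], []
    for record in records:
        problems = validate(record)
        if problems:
            log.warning("rejected %r: %s", record, ", ".join(problems))
            rejected.append({"record": record, "errors": problems})
        else:
            valid.append(record)
    log.info("validated %d records, %d rejected", len(records), len(rejected))
    return valid, rejected

# Hypothetical input batch
batch = [
    {"email": "ana@example.com", "age": 34},
    {"email": "no-at-sign", "age": 29},
    {"email": "li@example.com", "age": 240},
]
valid, rejected = split_valid_rejected(batch)
```

Only `valid` would continue to the load step; `rejected` keeps the original record together with the reasons, which is the information needed for later troubleshooting.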

Popular ETL Tools:

- Apache NiFi

- Talend

- Informatica

- IBM InfoSphere DataStage

- Microsoft SQL Server Integration Services (SSIS)

- AWS Glue

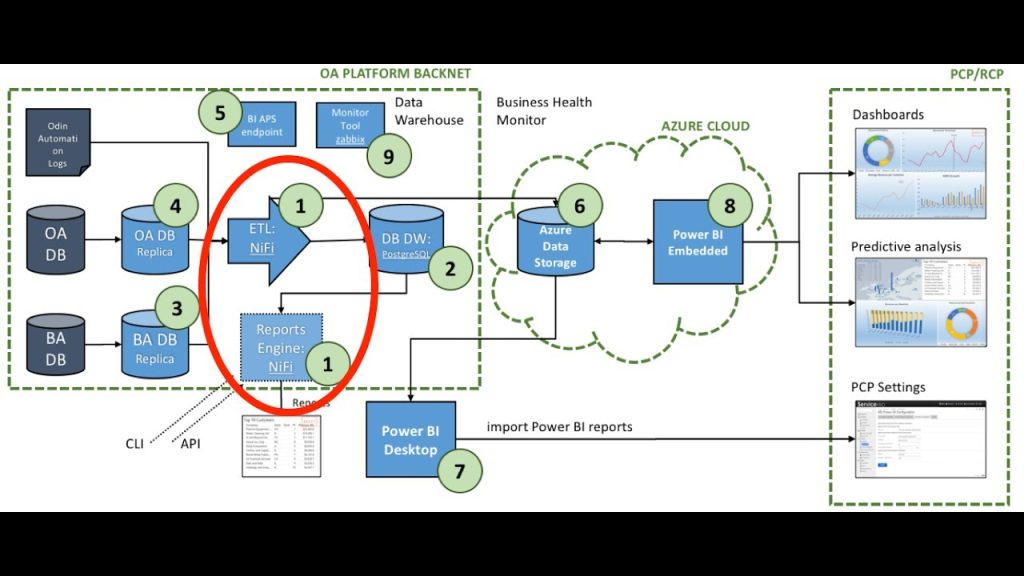

1. Apache NiFi

Apache NiFi is an open-source data integration tool that can be used for ETL operations. It is a top-level project of the Apache Software Foundation and is designed to automate the flow of data between systems in near real time. Its scalable, extensible architecture makes it suitable for processing data from various sources and delivering it to different destinations.

Key Features of Apache NiFi for ETL:

- Data Integration: Apache NiFi supports data integration from various sources, including databases, files, web services, IoT devices, and cloud-based platforms.

- Data Flow Automation: NiFi allows users to create data flows (also known as data pipelines) visually using a web-based graphical user interface (GUI). These data flows can be easily modified and extended as per the data processing requirements.

- Data Transformation: NiFi provides powerful data transformation capabilities, allowing users to manipulate, cleanse, and enrich data as it flows through the data pipeline.
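
NiFi flows are assembled in the GUI, not written as code, so the following is only a conceptual analogy and does not use any NiFi API. It models a data flow as a chain of small steps that each receive records from the previous step and pass results on. The step names (`source`, `parse_json`, `enrich`) and the sensor readings are invented for the illustration.

```python
import json

def source(lines):
    """Ingest step: emit raw records one at a time."""
    for line in lines:
        yield line

def parse_json(records):
    """Parse step: turn raw text into dictionaries, dropping malformed input."""
    for raw in records:
        try:
            yield json.loads(raw)
        except json.JSONDecodeError:
            continue  # a real flow would route this record to a failure path

def enrich(records):
    """Enrich step: add a derived Fahrenheit field to each reading."""
    for rec in records:
        rec["temp_f"] = rec["temp_c"] * 9 / 5 + 32
        yield rec

# Hypothetical IoT readings, including one malformed record
readings = [
    '{"sensor": "a", "temp_c": 20}',
    "not json",
    '{"sensor": "b", "temp_c": 100}',
]

# Steps are connected by passing one generator into the next,
# so records stream through the chain one at a time.
flow = enrich(parse_json(source(readings)))
results = list(flow)
```

Because each step only depends on the records it receives, a step can be inserted, removed, or replaced without rewriting the rest of the chain, which is the property the visual flow editor relies on.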

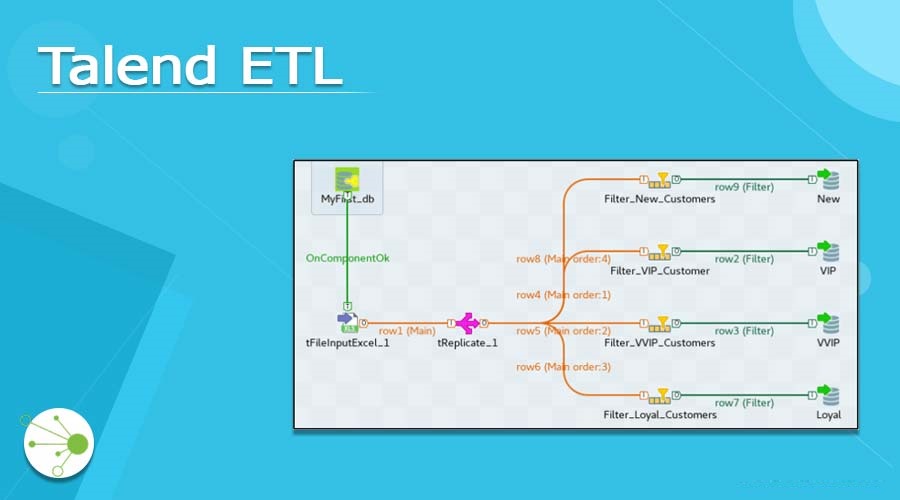

2. Talend

Talend is a widely used data integration and ETL tool, now part of Qlik. It became known through its open-source edition, Talend Open Studio, which was discontinued in January 2024. Talend provides a comprehensive platform for connecting to, accessing, and processing data from various sources and preparing it for analysis.

Key Features of Talend for ETL:

- Data Connectivity: Talend supports a vast range of data connectors and adapters, allowing users to connect to different data sources, including databases, cloud applications, web services, big data platforms, and more.

- Data Transformation: Talend provides a powerful data transformation engine with a drag-and-drop interface, making it easy for users to design and execute complex data transformation processes.

- Job Design: Talend allows users to design data integration jobs visually using a graphical development environment, reducing the need for coding and enabling faster development.

3. Informatica

Informatica is a data integration vendor whose ETL products, notably PowerCenter and the cloud-based Intelligent Data Management Cloud, let organizations connect, transform, and manage data from various sources. Its platform covers data quality, data governance, and data processing for business intelligence and analytics.

Key Features of Informatica for ETL:

- Big Data Integration: Informatica provides integration with big data technologies, including Apache Hadoop and Apache Spark, enabling organizations to process and analyze big data alongside traditional data sources.

- Data Masking: Informatica includes data masking capabilities to protect sensitive data by obfuscating or anonymizing the information.

- Master Data Management (MDM): Informatica offers Master Data Management solutions for managing and consolidating master data across the enterprise.
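
As a rough illustration of what data masking means in practice, the sketch below applies two common techniques: a keyed hash that replaces an identifier with a stable token, and partial masking that keeps only the last four digits of a card number. This is not Informatica's implementation; the key, field names, and helper functions are assumptions made for the example.

```python
import hashlib
import hmac

# Hypothetical secret; in practice this would come from a secrets manager.
SECRET_KEY = b"demo-key-change-me"

def pseudonymize(value, key=SECRET_KEY):
    """Keyed hash: the same input always yields the same token,
    so masked columns can still be joined on."""
    digest = hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return digest[:16]

def mask_card(number):
    """Partial masking: hide every digit except the last four."""
    digits = "".join(ch for ch in number if ch.isdigit())
    if len(digits) <= 4:
        return "*" * len(digits)
    return "*" * (len(digits) - 4) + digits[-4:]

def mask_record(record):
    """Return a copy of the record with sensitive fields masked."""
    masked = dict(record)
    masked["email"] = pseudonymize(record["email"])
    masked["card"] = mask_card(record["card"])
    return masked

# Hypothetical customer record
customer = {"name": "Ana", "email": "ana@example.com", "card": "4111 1111 1111 1111"}
safe = mask_record(customer)
```

The keyed hash is deterministic by design: two rows with the same email receive the same token, which preserves referential integrity across masked tables.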

4. IBM InfoSphere DataStage

IBM InfoSphere DataStage is IBM's ETL tool for moving data from various sources into a target data warehouse or data mart. It is part of the IBM InfoSphere Information Server suite, which includes other data integration and data management products.

Key Features of IBM InfoSphere DataStage for ETL:

- Job Orchestration: DataStage allows users to create data integration jobs visually using a graphical development environment. It provides job orchestration and workflow management to automate and schedule data integration tasks.

- Data Quality: Within the Information Server suite, DataStage works alongside data quality capabilities such as data profiling, data standardization, data cleansing, and data validation to ensure data accuracy and consistency.

- Data Governance: DataStage supports data governance initiatives by providing features for data lineage, data auditing, data versioning, and access controls, ensuring compliance and data security.
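
Data profiling, mentioned among the data quality features above, amounts to summarizing each column before deciding how to cleanse it. The sketch below is a generic illustration, not DataStage functionality: it counts missing values and distinct values per column. The `profile` function and the sample rows are hypothetical.

```python
from collections import Counter

def profile(rows, columns):
    """Summarize each column: missing values, distinct values, top value."""
    report = {}
    for col in columns:
        values = [row.get(col) for row in rows]
        # Treat None and empty strings as missing.
        present = [v for v in values if v not in (None, "")]
        report[col] = {
            "null_count": len(values) - len(present),
            "distinct": len(set(present)),
            "most_common": Counter(present).most_common(1)[0][0] if present else None,
        }
    return report

# Hypothetical sample rows
rows = [
    {"country": "DE", "email": "a@example.com"},
    {"country": "DE", "email": ""},
    {"country": "FR", "email": None},
    {"country": "", "email": "b@example.com"},
]
report = profile(rows, ["country", "email"])
```

A report like this shows where cleansing rules are needed, for example a column with many missing values or an unexpectedly high number of distinct spellings.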

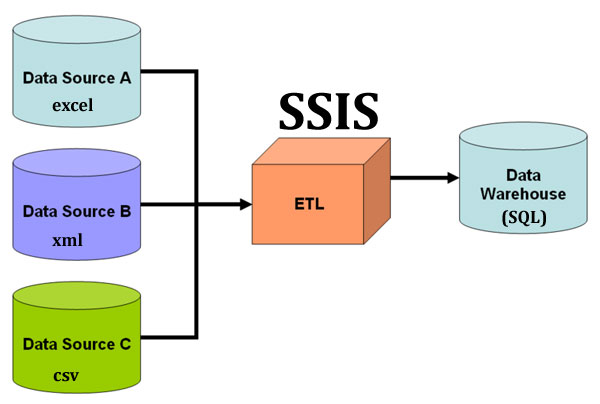

5. Microsoft SQL Server Integration Services (SSIS)

Microsoft SQL Server Integration Services (SSIS) is an ETL tool that Microsoft ships as part of SQL Server, its relational database management system. SSIS lets users integrate, transform, and load data, moving it between different data sources and destinations.

Key Features of Microsoft SQL Server Integration Services (SSIS) for ETL:

- Workflow Automation: SSIS allows users to create data integration workflows or packages visually using the SSIS Designer. These packages can be scheduled and automated using SQL Server Agent or other scheduling tools.

- Error Handling and Logging: SSIS provides error handling and logging features, allowing users to capture errors, redirect error rows, and log information for troubleshooting and auditing purposes.

- Data Quality: SSIS includes data quality features, such as the Data Profiling task for profiling and the DQS Cleansing transformation for cleansing, to ensure that data is accurate, consistent, and compliant with business rules.

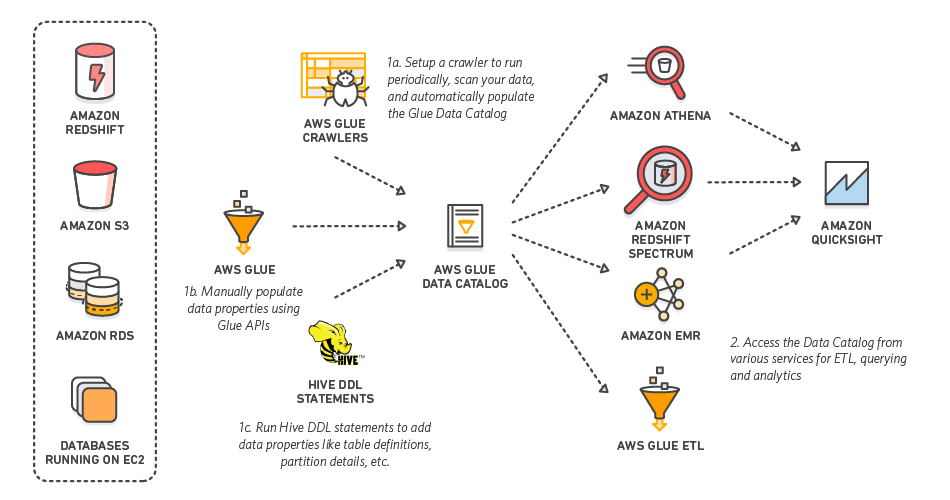

6. AWS Glue

AWS Glue is a fully managed, serverless ETL service provided by Amazon Web Services (AWS). It allows users to discover, catalog, and transform data from various sources for analysis, reporting, and data warehousing. AWS Glue automates much of the ETL process, which simplifies preparing and moving data into AWS data storage and analytics services.

Key Features of AWS Glue for ETL:

- Dynamic Scaling: AWS Glue automatically provisions the required compute resources for ETL jobs based on the data processing needs. It can scale up or down to handle large volumes of data efficiently.

- Job Scheduling and Orchestration: AWS Glue allows users to schedule ETL jobs to run at specified intervals or in response to data arrival. It also supports job orchestration to create complex workflows involving multiple ETL jobs.

- Data Quality and Validation: AWS Glue provides data quality features, such as data profiling and data validation, to ensure data accuracy and consistency.

- Integration with AWS Services: AWS Glue seamlessly integrates with other AWS services, including Amazon S3, Amazon Redshift, Amazon RDS, and Amazon Aurora, making it easy to move and process data within the AWS ecosystem.
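
Job orchestration comes down to running jobs in an order that respects their dependencies. AWS Glue expresses this through its own triggers and workflows; the sketch below does not call the Glue API and only illustrates the underlying idea using Python's standard `graphlib` module (Python 3.9+). The job names and the `run_workflow` helper are hypothetical.

```python
from graphlib import TopologicalSorter

# Hypothetical workflow: each job maps to the set of jobs it depends on.
dependencies = {
    "crawl_raw": set(),
    "clean_orders": {"crawl_raw"},
    "clean_customers": {"crawl_raw"},
    "join_and_load": {"clean_orders", "clean_customers"},
}

def run_workflow(deps, run_job):
    """Run every job after all of its dependencies, returning the order used."""
    order = []
    for job in TopologicalSorter(deps).static_order():
        run_job(job)
        order.append(job)
    return order

executed = run_workflow(dependencies, lambda job: print(f"running {job}"))
```

The two cleaning jobs have no dependency on each other, so an orchestrator is free to run them in parallel; only the final join has to wait for both.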